A practical guide for mid-level managers navigating the most uncomfortable conversation in today’s workplace.

A few years ago, I watched a brigade commander brief his staff using an AI-generated operational summary. The analysis was clean, confident, and wrong in one critical way — it had no feel for the human terrain. The relationships on the ground, the fragile trust between two local leaders, the unspoken tension that anyone who’d spent time in that village would have sensed immediately. The AI couldn’t know what it didn’t know.

The commander almost acted on it. What stopped him wasn’t the data — it was a sergeant first class in the back of the room who said, quietly, “Sir, I don’t think that’s right.”

That moment stuck with me. Not because the AI failed, but because of what it revealed: the most dangerous leadership gap in an AI environment isn’t competence. It’s the silencing of human judgment.

Someone on your team is carrying that judgment right now. The question is whether your leadership culture is making it easier or harder for them to use it.

The Real Problem Isn’t the AI

Here’s what most organizations get wrong: they treat AI adoption as a technology problem when it’s actually a leadership problem.

The tools themselves are not what’s destabilizing your team. It’s the silence around them. It’s the all-hands meeting where leadership talks about “efficiency gains” without acknowledging what that phrase actually sounds like to someone with a mortgage. It’s the ambiguity about what skills matter now, what roles are safe, and whether the years someone spent building expertise still count for something.

As a mid-level manager, you probably didn’t write the AI strategy. You may not even fully understand it yet. But you control something far more powerful than the strategy: the day-to-day experience your team has while living inside it.

Three Fears Your Team Is Actually Carrying

Before you can lead through this, you need to name what’s really happening. Your people aren’t just worried about job loss (though they are). They’re carrying three distinct fears that often get collapsed into one:

Fear #1: I’ll be replaced. The raw, existential version. “The company will decide a machine can do my job cheaper.”

Fear #2: I’ll become irrelevant. More subtle and often more painful. “Even if I keep my job, I won’t matter the way I used to. My judgment, my experience, my craft: none of it will be what makes me valuable.”

Fear #3: I’ll fall behind my peers. The competitive anxiety. “My colleagues are adapting faster than me. I’m already behind, and the gap is widening.”

Each of these requires a different response. Conflating them into a general “don’t worry, AI is just a tool” reassurance is the single biggest mistake managers make, because it addresses none of them.

Four Moves You Can Make Right Now

1. Name it out loud before they do

Don’t wait for someone to bring up the elephant in the room. Raise it yourself, in a team meeting, with candor: “I know a lot of us are watching what’s happening with AI and wondering what it means for our work here. I want to talk about that directly.”

This one move does more than almost anything else. It signals that you’re not pretending, you’re not hiding information, and you’re not managing the narrative. You’re actually leading through it. Teams don’t need certainty from their managers; they need honesty.

2. Reframe contribution from task output to human judgment

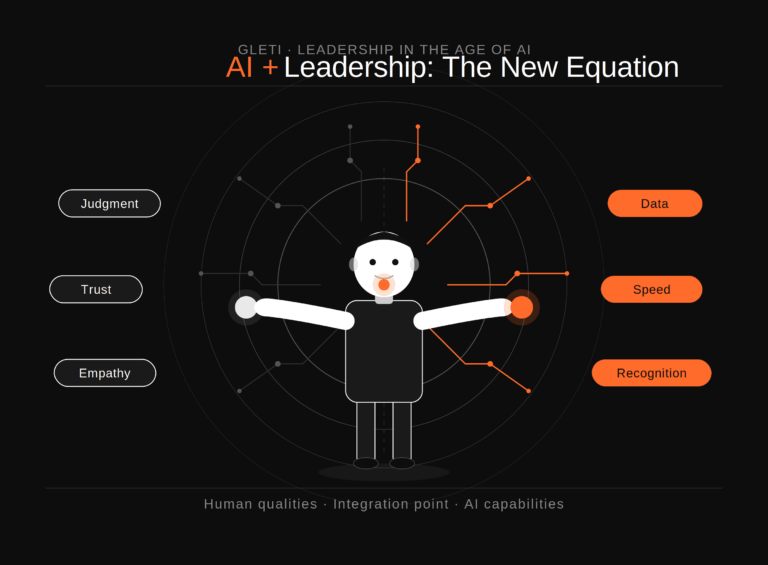

Start deliberately highlighting the things AI cannot do well: reading a room, building trust with a difficult stakeholder, making a call with incomplete information, knowing which problem is actually worth solving. Not as a consolation prize, but as a genuine shift in how you define excellent work.

When you give someone feedback or recognition, make it specific to these human qualities. “Your instinct to slow down that conversation before it escalated is exactly what we needed” lands very differently than “good job on the deliverable.”

This isn’t spin. It’s an accurate description of where human value is concentrating as AI absorbs more routine cognitive work.

3. Build deliberate upskilling into the team’s rhythm

You don’t need a formal L&D program to do this. Start small: dedicate fifteen minutes of one team meeting each month to having a team member share how they’ve used an AI tool, including what worked, what didn’t, and what surprised them. Create shared space for experimentation without the pressure of performance.

The goal is to move your team from passive observers of the AI shift to active participants in it. People who are learning alongside the technology feel far less threatened by it than people who are watching it happen to them.

4. Protect human judgment in high-stakes moments

This is the most important one. Be explicit with your team about which decisions require human deliberation and then actually protect that space. Don’t let AI-generated outputs become the default answer in consequential conversations just because they’re fast and confident-sounding.

When a recommendation comes from a tool, ask your team: What would we need to know to push back on this? What’s the AI not accounting for? This models the kind of critical engagement that will define strong leadership in the years ahead, and it sends a clear message: human judgment is not a formality here. It’s the point.

The Identity Shift This Moment Requires

Here’s the harder conversation underneath all of this.

The manager who thrived in 2015 was often someone who could efficiently coordinate tasks, track output, and keep projects moving. A meaningful portion of that work is now either automated or will be soon. That’s not a threat; it’s an invitation to evolve toward the version of management that was always more important: the human layer.

Your job now is less about managing workflows and more about being the person who helps your team do their best thinking, navigate ambiguity without panicking, hold onto their sense of professional identity through a period of real disruption, and bring their judgment to bear on problems that actually need it.

That’s a harder job. It’s also a more meaningful one.

The Challenge

Before your next team meeting, identify one decision where your team deferred to an AI output without pushback. One moment where the tool’s confidence filled the space where human judgment should have been.

Then go back and open it up. Ask the question. Invite the sergeant in the back of the room to speak.

That’s not a small thing. In a world moving fast toward AI-assisted everything, the manager who protects the space for human judgment isn’t being old-fashioned.

They’re doing the most important leadership work of this era.

Written by: Dr Andrew Campbell, Director, Global Leadership Education and Training Institute